Introduction

Web scraping, also called web harvesting or web data extraction, is the process of extracting public data from the web so that it can be used for some purpose.

When scraping websites that use protections such as rate limits and DDoS protection, or sites that force users to log in, such as Instagram, LinkedIn, and many others, there’s a good chance of getting blocked. That’s typically when developers start wrestling with proxies and Chrome rendering in an attempt to simulate real users. Building this tooling in-house has two drawbacks:

Increased overhead: an engineering organization typically has business goals it is striving towards, and would rather dedicate engineering hours to core product development than to building tooling. Using a service is usually more economical, because in-house tooling also needs ongoing maintenance to stay reliable.

Lack of expertise: the difficulty isn’t in scraping itself as much as it is in getting access to the data and content developers are after. Data is a closely guarded asset in this era, and platforms charge tens of thousands for access to their APIs. Some don’t even have APIs available for developers to access.

ScrapeOwl is a done-for-you scraping API for developers that ensures you always have access to the web data you’re trying to scrape. For the rest of this introduction, we will take a look at some practical applications of web scraping, and how to get started with ScrapeOwl to scrape the web.

Practical Applications: What is web scraping used for?

Web scraping is used for many things, from monitoring weather changes and real-time data to tracking financial data and fetching product data. Each business or individual gathers data for its own reasons. Some examples are:

- Real-time analytics: web pages change frequently, so analytics that depend on them need data scraped in real time or near real time. A scraping API helps here because it can crawl the required information at high frequency and speed, which gives you fresh data for analytics.

- Competitive product data monitoring: e-commerce businesses and online shops compete against thousands of products with the same features and descriptions. Third-party sellers on Amazon, eBay, AliExpress, etc. need to determine product ranking, analyze product reviews, and evaluate offers to optimize their own offerings. Web crawling automates this process by helping businesses monitor their competitors’ data effectively.

- Predictive analysis: combining web scraping with predictive analytics makes the marketing process more efficient. Predictive analysis is one of the most important tools in business today. It estimates probabilities by analyzing existing data in order to forecast future patterns, and we help you obtain the data you need. Predictive analysis can’t predict the future with certainty, but it can give you a good picture of what the possibilities are.

- Job boards: finding a new job can be hectic. Working through an endless list of jobs, applying, and never hearing back from recruiters can feel like a waste of valuable time, and web scraping can take over part of that work. A scraper can search for jobs that match the given requirements, scrape the important data listed in each posting, and filter out irrelevant results. Another potential application is curating job posts across an industry or role to create a niche job board.

- Search engine results: web scrapers work best with public data available on the internet and can be set up to scrape the URLs from a website or a search result page. The source could be a simple blog or a more complex Google or DuckDuckGo results page from which you want to pull all the URLs for a particular keyword. A web scraping script could also collect all the images shown on a Google search results page.
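To make the URL-extraction use case more concrete, here is a minimal sketch in Python that pulls every link out of a page’s HTML using only the standard library. The sample HTML, the base URL, and the names `LinkCollector` and `extract_links` are made up for illustration; in practice the HTML would come from whatever scraper or API you use.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin


class LinkCollector(HTMLParser):
    """Collects the href of every <a> tag, resolved against a base URL."""

    def __init__(self, base_url):
        super().__init__()
        self.base_url = base_url
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        for name, value in attrs:
            if name == "href" and value:
                # Resolve relative links such as "/second" against the page URL.
                self.links.append(urljoin(self.base_url, value))


def extract_links(html, base_url):
    """Returns all link targets found in the given HTML, in document order."""
    collector = LinkCollector(base_url)
    collector.feed(html)
    return collector.links


# Hypothetical sample page, used only for illustration.
SAMPLE_HTML = """
<ul>
  <li><a href="https://example.com/first">First result</a></li>
  <li><a href="/second">Second result</a></li>
  <li><a>No link here</a></li>
</ul>
"""

links = extract_links(SAMPLE_HTML, "https://example.com/search?q=owls")
print(links)
```

Anchors without an `href` are skipped, and relative links are turned into absolute URLs so the result can be fed straight into a follow-up crawl.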

Advantages of using ScrapeOwl for Web Scraping

- Easy to integrate

- Cost and maintenance

- Reliability

Easy to integrate:

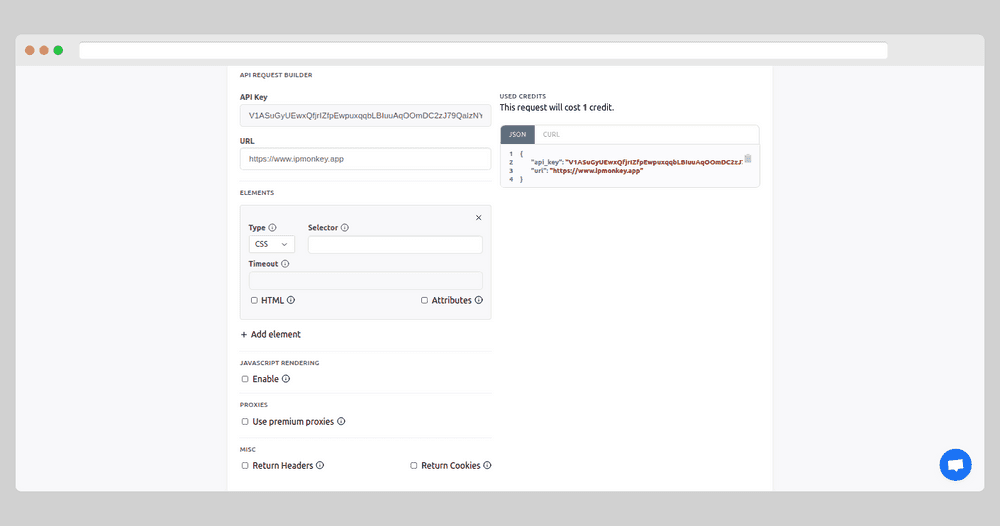

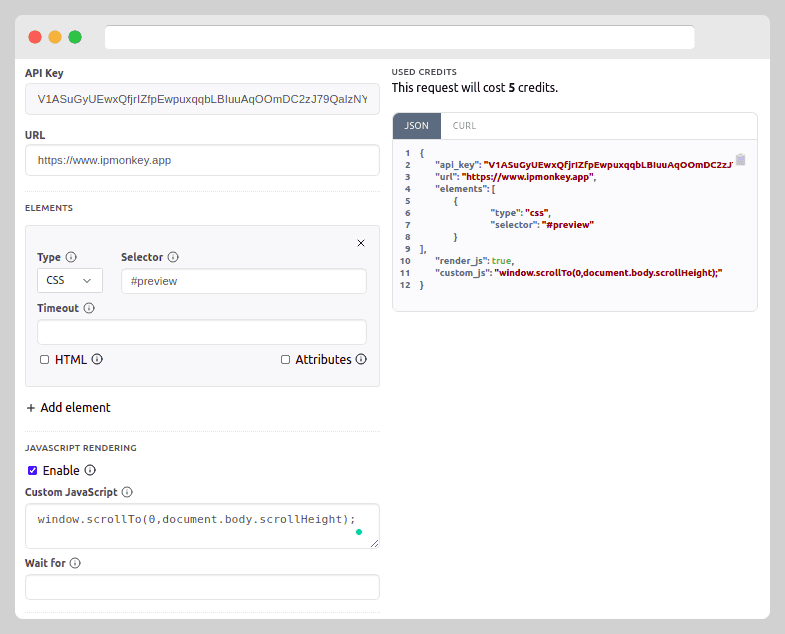

ScrapeOwl provides a user-friendly API with features like JavaScript rendering and premium proxies, which are harder to block than data centre proxies. You can even set the country of the request’s source IP address to access locale-specific content. In addition to showing your API key and your scraping statistics, our dashboard comes with a request builder that generates JSON and cURL previews of your API requests before you execute them.

Cost and maintenance:

When it comes to gathering and scraping data, you can have an in-house scraping team or use web scraping providers like ScrapeOwl. An in-house team is more expensive and less feasible due to infrastructure maintenance, proxy and server costs.

Scraping service providers like ScrapeOwl free up your time for core business activities. Premium proxies, JavaScript rendering, and ready-to-use data help teams of all sizes grow their business.

Reliability:

ScrapeOwl is developed to meet the growing demands and priorities of consumers and developers, and it provides a simple dashboard interface. The ScrapeOwl API is not only fast but also highly reliable: we have an average success rate of 99%, and a professional support team to solve your problems and make your life easier.

Getting Started with ScrapeOwl

ScrapeOwl is a complete web scraping solution for developers. Sign up at scrapeowl.com for a free trial with no credit card required. The free starting plan comes with 1000 credits, along with JavaScript rendering, premium proxies, and all the other features of the paid version.

To understand the API parameters, you can build your request in the API Request Builder section before sending it out.

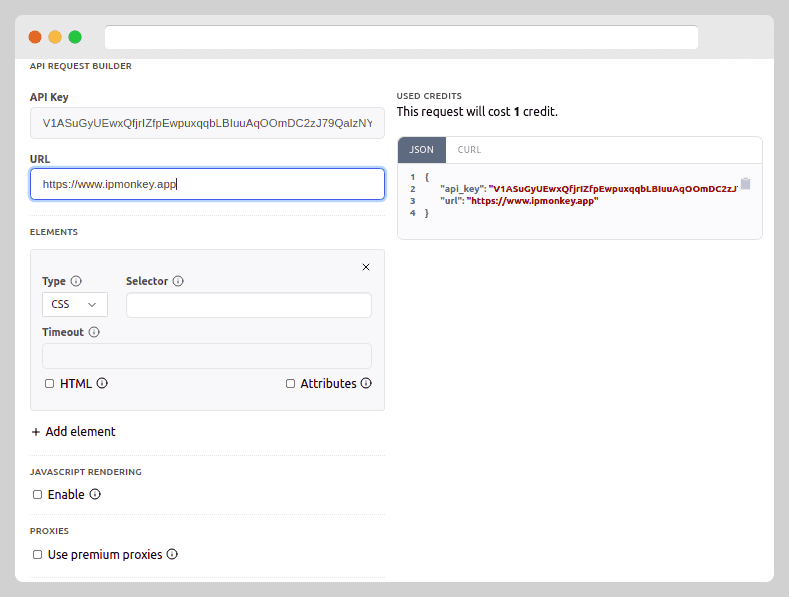

Provide the URL of the website.

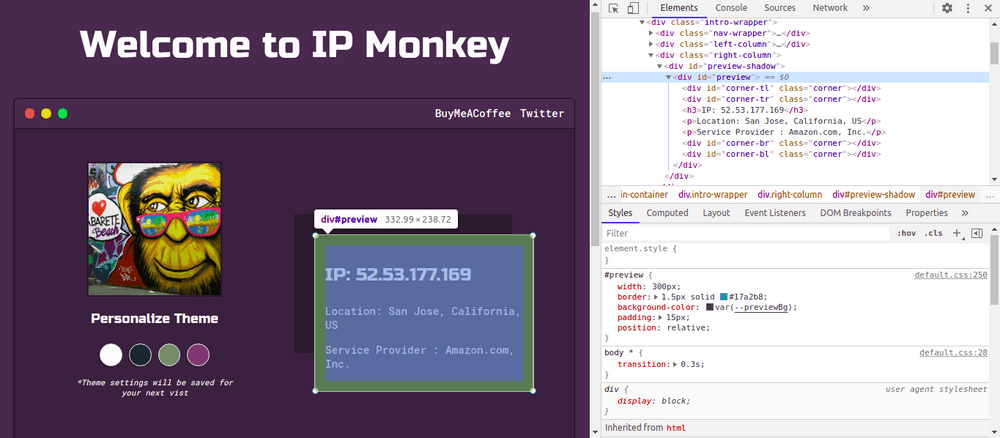

For this tutorial and for learning purposes, I’ll scrape the website IPMonkey (ipmonkey.app). Start building the request by providing the URL in the URL section of the API request builder.

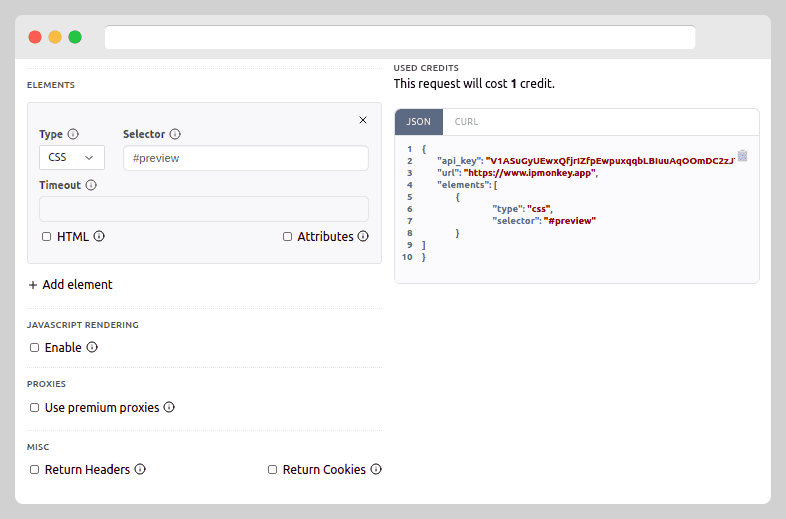

Provide the CSS selector or XPath of the element you want to parse.

I want to scrape the IP address information contained in the page’s preview element. To do so, I set the element type to CSS and enter the selector #preview in Selector. (In CSS, #preview matches an element by its ID; a class would be written .preview.)

Note: If you want to scrape the complete page, keep the Selector empty.
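The request builder steps so far (a URL plus an optional selector) can be sketched as a small Python helper that assembles the request body. The field names `api_key`, `url`, `elements`, `type`, and `selector` are assumptions made for illustration, as are the helper name and the `https://` prefix; treat the JSON preview in your dashboard as the authoritative shape.

```python
import json


def build_scrape_payload(api_key, url, selector=None, selector_type="css"):
    """Builds a request body. All field names here are assumptions."""
    payload = {"api_key": api_key, "url": url}
    if selector:
        # Only ask for specific elements when a selector is given;
        # leaving it out corresponds to scraping the complete page.
        payload["elements"] = [{"type": selector_type, "selector": selector}]
    return payload


# Request for the #preview element of the tutorial site.
payload = build_scrape_payload("YOUR_API_KEY", "https://ipmonkey.app", "#preview")
preview_json = json.dumps(payload, indent=2)
print(preview_json)

# Request for the complete page: no selector at all.
full_page = build_scrape_payload("YOUR_API_KEY", "https://ipmonkey.app")
```

Keeping the payload in one function makes it easy to compare what your code sends with what the request builder previews.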

JavaScript Rendering

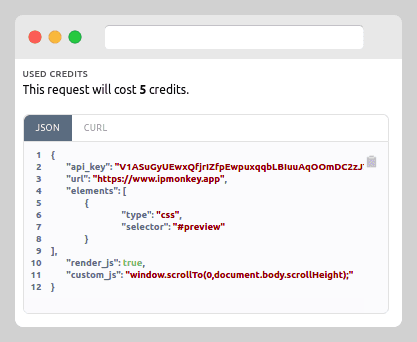

Some websites, including IPMonkey, are powered by JavaScript, and their data can only be obtained after the page has been rendered. To scrape such a website successfully, turn on JavaScript rendering and put any JavaScript code you need inside Custom JavaScript. That code is executed in the page’s console before the content is captured. A JavaScript rendering request can cost up to 5 credits, so use this feature only when necessary.
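As a rough budgeting aid, the short Python sketch below works out how many JavaScript-rendered requests are guaranteed to fit in the 1000 credits of the free plan if every request costs the maximum of 5 credits. The function name is illustrative, and the actual cost per request may be lower than this upper bound.

```python
FREE_PLAN_CREDITS = 1000  # credits included in the free plan
MAX_JS_RENDER_COST = 5    # upper bound for one JavaScript-rendered request


def guaranteed_requests(credits, max_cost_per_request):
    """Requests that fit in the budget even if each one costs the maximum."""
    if max_cost_per_request <= 0:
        raise ValueError("max_cost_per_request must be positive")
    return credits // max_cost_per_request


worst_case_js_requests = guaranteed_requests(FREE_PLAN_CREDITS, MAX_JS_RENDER_COST)
print(worst_case_js_requests)  # 200
```

In other words, 1000 credits cover at least 200 JavaScript-rendered requests, which is why it pays to leave rendering off for pages that do not need it.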

Meanwhile, on the right, you can find your JSON and cURL requests ready to be used. Simply copy and paste the generated JSON into your Python, Ruby, Node.js, or PHP code to start working with the API immediately.
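Here is a minimal sketch of how the generated JSON could be sent from Python using only the standard library. The endpoint URL, the JSON field names, and the assumption that the response body is JSON are not taken from this post; check them against the cURL preview in your dashboard before relying on them.

```python
import json
import urllib.request

# Assumption: replace with the endpoint shown in your dashboard's cURL preview.
API_ENDPOINT = "https://api.scrapeowl.com/v1/scrape"


def build_request(payload, endpoint=API_ENDPOINT):
    """Wraps a payload dict in a JSON POST request without sending it."""
    body = json.dumps(payload).encode("utf-8")
    return urllib.request.Request(
        endpoint,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def send(request, timeout=30):
    """Sends the request over the network and decodes a JSON response."""
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.loads(response.read().decode("utf-8"))


# Paste the JSON from the request builder here; this string is a stand-in.
generated_json = '{"api_key": "YOUR_API_KEY", "url": "https://ipmonkey.app"}'
request = build_request(json.loads(generated_json))

# result = send(request)  # uncomment once a real API key is in place
```

Building the request separately from sending it lets you inspect the method, headers, and body first, much like the preview in the request builder.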

You can also turn on Premium Proxies, which are less likely to be blocked than data centre proxies.

Summing it Up

I’ve shown how you can easily create a web scraping request to extract data from complex websites and web apps in minutes. In the next blog post in this series, I’ll show how you can scrape an e-commerce platform like Amazon with the help of ScrapeOwl. Whether you are scraping informational websites or product data, ScrapeOwl is the place to start.

ScrapeOwl is a leading scraping API service provider that offers premium-quality data extraction from any web page using a simple API request.